The channels are the same. Email, paid, organic social, events, SEO, and its newer cousin, AI-generated discovery. We have had roughly the same toolkit for five years. One could argue the only meaningful addition is AGO, optimizing for AI-generated discovery, and even that is an iteration on SEO.

So why does B2B marketing feel harder than it has ever been?

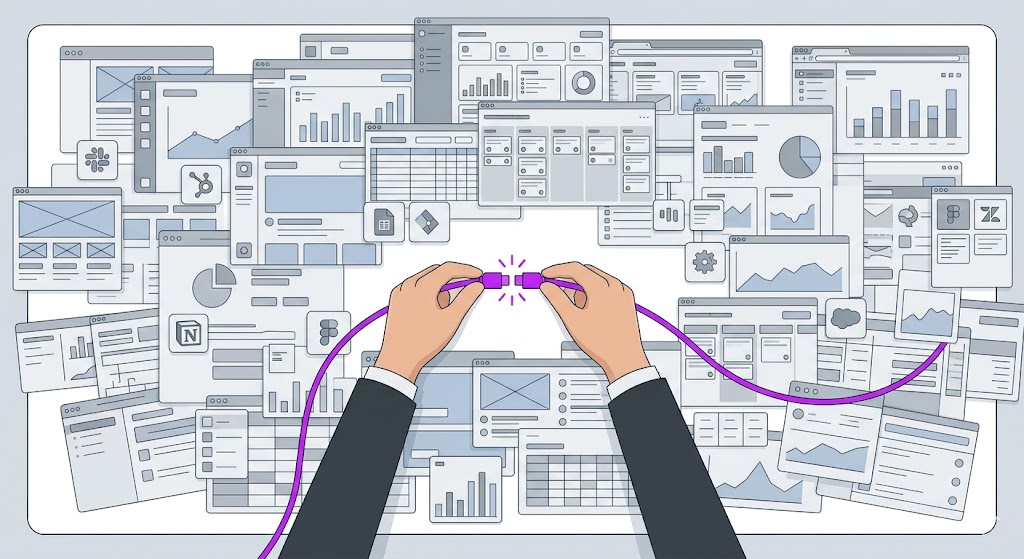

Because the work changed. Not the channels, not the tactics, but the connective tissue between them. Data used to come from one or two providers. Now there are dozens. Point solutions used to be a handful per category. Now there are hundreds, each with micro-vertical variations and subtle differences that matter. Almost every tool comes with an API or webhook. The ability to connect them technically exists. The ability to do it well, and maintain it, does not live on most marketing teams.

What emerged is a discipline that did not have a name five years ago: GTM engineering. The work of stitching data sources, point solutions, AI capabilities, and channel execution into a coherent, persistent system. It is not creative work. It is not traditional marketing operations. It is engineering applied to go-to-market, and most organizations have not recognized it as a distinct skill set, let alone staffed for it.

This piece lays out what changed, why the gap between expectations and capabilities keeps widening, and what leaders should actually be paying attention to.

TL;DR

- The B2B marketing toolkit has barely changed in five years. What changed is the engineering required to make it all work together.

- Three forces converged: data became ubiquitous, point solutions multiplied into the hundreds, and AI added a new layer of capability and expectation on top of both.

- The work of connecting these systems, what we are calling GTM engineering, requires technical skills that most marketing teams were never built to have.

- Marketing teams used to be 70-80% creative and 20-30% technical. The ratio needs to shift, but most organizations have not adjusted.

- Creatives have largely figured out AI. The gap is on the systems and orchestration side.

- Leadership pressure to “do more with less” and “use more AI” often misses the point entirely. The bottleneck is not effort or adoption. It is architecture.

- Influencer playbooks rarely transfer to in-house teams because they are built on agency economics: volume, wholesale pricing, and proprietary data you do not have access to.

- If your strategic inputs are wrong (positioning, ICP, messaging), no amount of engineering saves the output.

- Leaders need to either fund GTM engineering as a real capability, or stop expecting the results it produces.

Where the job changed

The barrier to creating things dropped. The barrier to connecting things went up. The channels are the same; the engineering required to make them work together is completely different.

- Creating things (barrier dropped): WYSIWYG builders, AI drafting, and design systems. One or two people now do the work of three or four. The arc: HTML by hand, then WordPress plus developers, then drag-and-drop, and now AI builds it for you.

- Connecting things (barrier went up): dozens of data providers, hundreds of point solutions, and AI as a layer on everything. Nothing connects automatically. What it requires: API logic, data modeling, cross-system orchestration, and ongoing maintenance.

That gap is where GTM engineering lives. It is not creative work and it is not traditional marketing operations. It is a new discipline, and most teams have not staffed for it.

1. The same toolkit, a completely different job

We still have email, paid, organic social, events, and SEO. But operating them is an entirely different discipline than it was five years ago.

Consider what happened in three areas simultaneously. Data went from one primary source (ZoomInfo, or before that, Data.com and InfoUSA) to dozens of providers. There are now specialty shops for SMB, enterprise, consumer, B2B, and micro-verticals within each. There are providers that stitch other providers together. Data is no longer scarce. It is ubiquitous. The constraint shifted from access to operationalization.

Point solutions followed the same pattern. Website visitor identification used to have one or two players. Now there are dozens, and they are not created equal. Some identify 10% of visitors, while others claim 50-70%. The same explosion happened across ABM, signal management, intent scoring, ad management, and cold email infrastructure. Tools like Mailreef, Maildoso, Instantly, and many others offer inbox warm-up, domain purchasing, and inbox provisioning across Microsoft and Gmail. There are agencies maintaining glossaries of 100-plus sales and marketing tools that companies should be considering.

Then AI arrived, not as a single tool but as a layer that touches everything. Content generation, data enrichment, lead scoring, personalization, proposal creation, automation flows. Each application is useful. None of them connect to each other automatically.

What this means

The job of making all of this work together is not a marketing job in the traditional sense. It requires understanding data flows, API logic, semantic data modeling, and cross-system orchestration. Think about the arc of technical complexity: when MySpace came out, marketers had to learn a bit of HTML. Then WordPress, and you still needed developers. Then WYSIWYG builders, and you could drag and drop. Now you can talk to an agentic interface and it builds the code for you.

The barrier to creating things dropped. The barrier to connecting things went up. That gap is where GTM engineering lives.
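Much of the "semantic data modeling" mentioned above comes down to agreeing on what one account record looks like when two providers describe the same company differently. The following is a minimal Python sketch of that normalize-and-merge step. It assumes two hypothetical enrichment providers whose payload shapes and field names are invented for illustration (real providers each document their own schemas). The `midpoint` rule for headcount bands and the priority-order merge are also illustrative assumptions, not a standard.

```python
from dataclasses import dataclass
from typing import Optional

# Two hypothetical enrichment payloads describing the same company.
# Field names are invented for illustration; real providers document their own schemas.
PROVIDER_A = {"companyName": "Acme Corp", "domain": "ACME.com", "employees": "51-200"}
PROVIDER_B = {"org": {"name": "Acme Corporation", "website": "https://www.acme.com/"}, "headcount": 140}


@dataclass
class Account:
    """The shared (canonical) account record that every downstream tool reads."""
    domain: str
    name: str
    employee_count: Optional[int]
    sources: list[str]


def normalize_domain(raw: str) -> str:
    """Reduce values like 'https://www.ACME.com/' to 'acme.com' so records can be matched."""
    d = raw.strip().lower()
    for prefix in ("https://", "http://"):
        if d.startswith(prefix):
            d = d[len(prefix):]
    if d.startswith("www."):
        d = d[len("www."):]
    return d.rstrip("/")


def midpoint(band: str) -> int:
    """Convert a headcount band like '51-200' to its midpoint (a simplifying assumption)."""
    low, high = (int(x) for x in band.split("-"))
    return (low + high) // 2


def from_provider_a(p: dict) -> Account:
    # Map provider A's (hypothetical) field names onto the canonical record.
    return Account(normalize_domain(p["domain"]), p["companyName"], midpoint(p["employees"]), ["provider_a"])


def from_provider_b(p: dict) -> Account:
    # Provider B nests company fields under "org" and reports an exact headcount.
    org = p["org"]
    return Account(normalize_domain(org["website"]), org["name"], p["headcount"], ["provider_b"])


def merge(records: list[Account]) -> dict[str, Account]:
    """Merge records that share a normalized domain.

    Records are assumed to arrive in priority order: the first source seen wins each
    field, and later sources only fill fields that are still empty.
    """
    merged: dict[str, Account] = {}
    for rec in records:
        current = merged.get(rec.domain)
        if current is None:
            # Copy so the caller's record (and its sources list) is not mutated.
            merged[rec.domain] = Account(rec.domain, rec.name, rec.employee_count, list(rec.sources))
            continue
        if not current.name:
            current.name = rec.name
        if current.employee_count is None:
            current.employee_count = rec.employee_count
        current.sources.extend(rec.sources)
    return merged


# Provider B is listed first because its exact headcount is preferred over A's band.
accounts = merge([from_provider_b(PROVIDER_B), from_provider_a(PROVIDER_A)])
acme = accounts["acme.com"]
print(acme.name, acme.employee_count, acme.sources)
# Acme Corporation 140 ['provider_b', 'provider_a']
```

The precedence rule is the part that carries the real decision. Every provider you add brings another mapping function and another choice about which source wins each field. That is one concrete reason the connecting work needs an owner and ongoing maintenance rather than a one-time setup.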

Why it matters

Keeping up with these tools is a full-time job. You can spend weeks researching options in a single category and still not feel confident in your choice. For cold email infrastructure alone, there are dozens of options. Leadership often underestimates how much time and energy the evaluation process requires, let alone implementation and maintenance.

Operator moves

- Recognize that the job of connecting data, tools, and channels is now a distinct discipline. It is not something you bolt onto an existing marketing role.

- Distinguish between your core systems (CRM, marketing automation) and your point solutions. Core systems should be stable. Point solutions are where complexity and sprawl compound.

- Stop asking “why aren’t we using this tool?” unless you are also asking “who will integrate it, maintain it, and make sure it works with everything else?”

2. The team was built for a different era

Marketing teams used to be 70-80% creative and 20-30% technical. That ratio does not match the work anymore.

Team composition

Creatives figured out AI. The bottleneck moved to the systems side, and most teams never rebalanced.

- How teams were built (70-80% creative): 3-5 designers, 3-5 writers, and a handful of technical roles, with most systems work outsourced to agencies. Typical roles: designers, writers, content managers, brand strategists.

- What the work requires (rebalanced toward systems): AI amplified creative output, and the bottleneck shifted to RevOps, systems architecture, and cross-tool orchestration. Missing roles: GTM engineers, data architects, integration specialists, automation engineers. This is a different hire, not a training problem.

You used to have three to five designers, three to five writers, and a handful of technical roles. Much of the technical work got outsourced to agencies. AI and design systems have changed the math on the creative side. One or two designers or writers can now do the work of three or four, not because they are working harder, but because the tools genuinely amplify output.

The creative team has probably figured out AI. They have likely tripled their output and are working more efficiently than leadership gives them credit for. They can make personalized videos in seconds, turn a conversation into a polished proposal, and create customized slide decks faster than anyone expected. That side of the house is not the bottleneck.

The bottleneck is on the other side: the RevOps, systems architecture, and go-to-market technology work that requires understanding data flows, API integrations, and cross-tool orchestration. A lot of marketers are creatives. Asking them to map webhooks and configure API integrations is like asking an engineer to write brand copy.

What this means

You have CEOs, CMOs, and VPs saying “we need to use more AI,” and you have writers and designers who have genuinely mastered these tools and are frustrated that leadership does not see it. Meanwhile, the same leadership is asking the systems side of the team to stitch together tools that require engineering-level skills. Both sides are being pushed to do more, but only one side actually has a skills gap, and it is not the one leadership is usually focused on.

Why it matters

Very few people have a marketing degree and the technical ability to orchestrate 15 integrated tools. It is not a training problem you solve in a weekend. The people who can do this work, the knowledge workers who understand systems, logic, and orchestration, are a different profile than most marketing teams were built around. And they are expensive and hard to find.

Operator moves

- Audit whether your team composition matches the work that actually needs to happen. If you are heavy on creative and light on systems, the imbalance will show up as underperformance that looks like a tactics problem but is actually a staffing problem.

- Stop pushing your creative team to “use more AI” without understanding what they are already doing. They are probably ahead of you.

- Consider whether you need to shift headcount from traditional roles into GTM engineering roles, or bring in external help specifically for integration and orchestration.

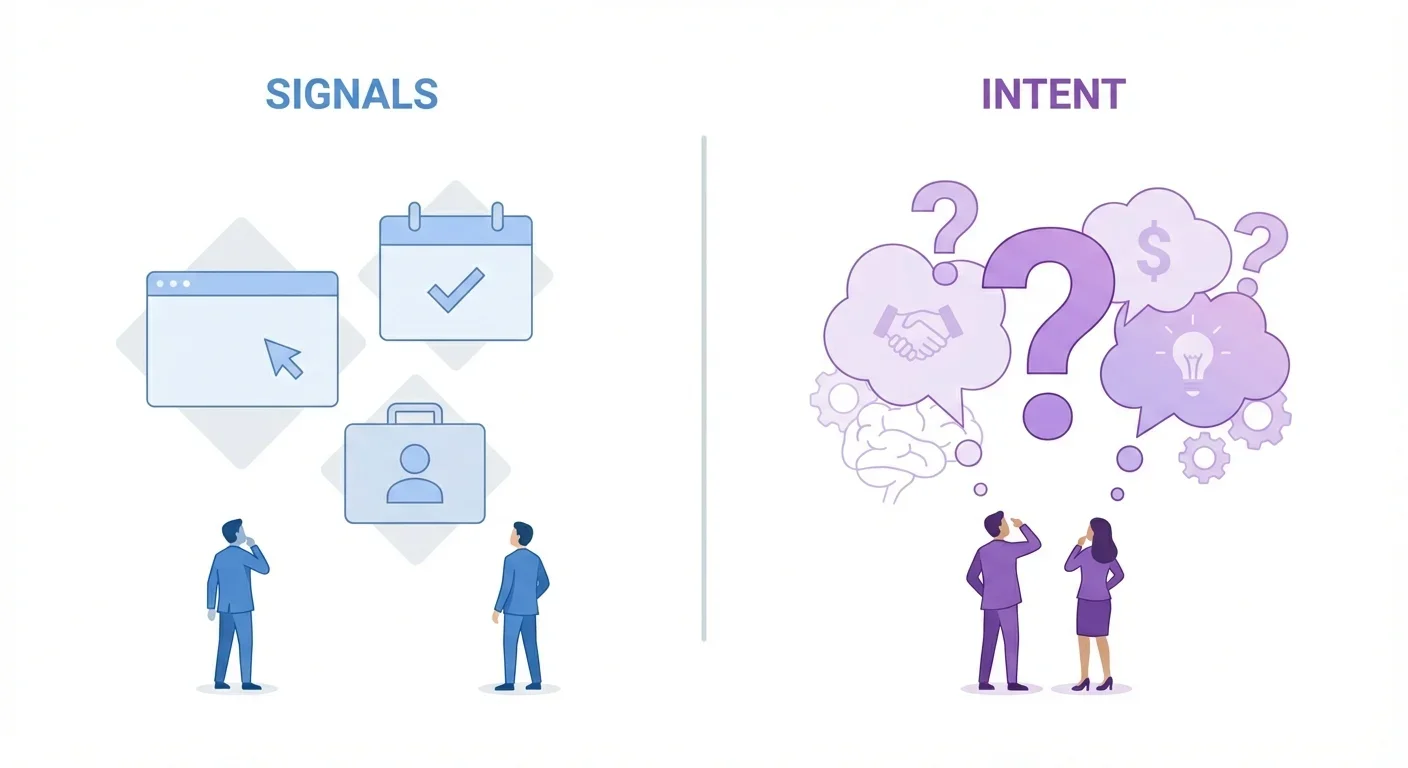

3. AI is being adopted in silos, not deployed as a system

The AI adoption problem is not effort. It is architecture.

AI adoption maturity

Most teams are stuck at level one. Level three is where the leverage is. Individual adoption is not the same as organizational deployment, and the gap between them is an engineering problem.

- Level 1, individual adoption: people are using AI effectively on their own. Creatives draft content, generate visuals, and create personalized assets. Systems people use it for enrichment and scoring. Both sides have real wins (ChatGPT for drafting, AI image generation, personal workflows).

- The silo problem: everyone is doing it independently, without shared context, shared tooling, or shared standards. There is no organizational system, just individuals with their own workflows (no shared context, duplicate work, no standards).

- Level 3, organizational deployment: everyone has access to the same context and the same toolkit. AI is deployed as infrastructure with standards, shared data, and investment (shared context, common tooling, organizational standards, a budget and an owner). This is an engineering project, not an adoption initiative.

Telling your team to “use more AI” is about as useful as telling a sales team to “sell more.” If you want Level 3, treat it as an engineering initiative with a budget, timeline, and owner.

Most leaders are using AI as an advanced Google or a drafting assistant. They see the potential but do not have the criteria to evaluate what good AI usage looks like inside a marketing function. So they default to “use more AI,” which is about as useful as telling a sales team to “sell more.”

The reality is that a lot of people are using AI effectively. Creatives use it to draft content, generate visuals, and create personalized assets. Systems people use it for enrichment, scoring, and automation. Both sides have real wins. The problem is that everyone is doing it independently, without shared context, shared tooling, or shared standards. There is no organizational system. There are just individuals with their own workflows.

What this means

AI is contextual. A home services company might use it to build tailored quotes by pulling unstructured data from dozens of distributors. A SaaS company might use it for rapid prototyping and product experiments. Those require completely different skill sets. The idea that “AI” is one thing you can tell people to use more of misses the point entirely.

And pretty soon we will stop talking about “AI” as a separate capability at all. It is becoming so baked into everything that calling it a skill will sound like calling “knowing how to use a search engine” a skill. The tools are changing daily. OpenAI released two ChatGPT models in a single week. By the time you learn one thing, the ground has shifted.

Why it matters

If you want AI deployed as a system across the marketing function, where everyone has access to the same context and the same toolkit, that is a GTM engineering project. It requires infrastructure, standards, and investment. It is not something that happens because you told people to use more AI.

Operator moves

- Stop saying “use more AI” to your team. Instead, ask them what they are already doing and where they are hitting walls.

- If you want organizational AI adoption (not just individual usage), treat it as an engineering initiative with a budget, timeline, and owner.

- Accept that AI skill sets are contextual and industry-specific. There is no universal “AI skill” to hire for.

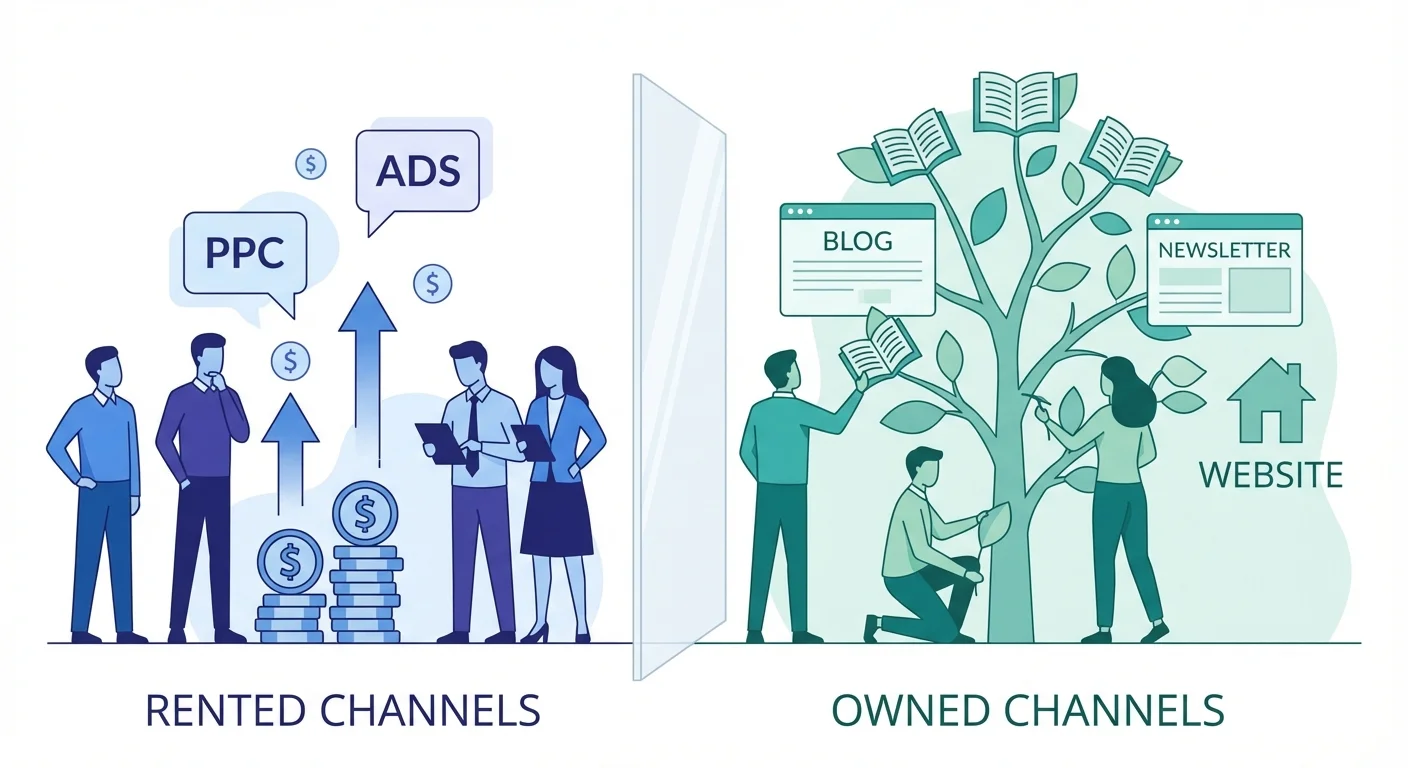

4. The influencer playbook does not transfer

When an influencer posts “I made $10 million MRR with this 27-tool stack,” understand the economics behind that claim before you try to replicate it.

The vast majority of marketing influencers come from the agency world. They have built and seen these systems 10, 50, or 150 times across dozens of clients. They have economies of scale and scope. Where you pay 4 cents per credit, they pay a fraction of a penny because of wholesale partnerships and volume. They have proprietary data lakes. They specialize in narrow niches, maybe growth-stage SaaS under $25 million, or home services (HVAC, roofing, renovations), where a single playbook works reliably.

Their results are real. Their context is not yours.
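Here is a worked example that makes the per-credit gap concrete. The monthly volume (100,000 credits) and the agency rate ($0.005, or half a cent) are illustrative assumptions, not quoted prices from any provider.

$$
\begin{aligned}
\text{In-house: } & 100{,}000 \times \$0.04 = \$4{,}000 \text{ per month} \\
\text{Agency (assumed rate): } & 100{,}000 \times \$0.005 = \$500 \text{ per month}
\end{aligned}
$$

At those assumed numbers, the in-house team pays eight times as much for the same volume of data. That is before counting the testing volume an agency spreads across dozens of clients.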

What this means

They are running programs across dozens of clients, which gives them testing volume your single in-house team cannot match. They have access to private pricing tiers, distributor relationships, and data resources that are not available at in-house scale. Their economics are fundamentally different.

Influencers do not post their losses. If you only see the one winner out of fifty thousand who tried, that is survivorship bias. When they do share something that did not work, it is usually framed as a rosy learning moment. And there is a timing problem. Our news feeds are so personalized now that your team may not have even seen the tool a leader is asking about. It is not that they dismissed it. It literally may not have crossed their screen.

Why it matters

Almost every time a leader forwards a LinkedIn post asking “why aren’t we using this?”, the team has already evaluated it, often multiple times, and excluded it for budget, redundancy, or capacity reasons. That tool is usually one of 75 things that were already considered. Shiny object syndrome is real, and it creates noise that distracts from the GTM engineering work that actually moves the needle.

Operator moves

- Treat influencer tool recommendations as research inputs, not validated playbooks.

- Before adopting someone else’s stack, understand their context: agency vs. in-house, their volume, their pricing, their niche. If any of those are fundamentally different from yours, the playbook will not transfer.

- Protect your team’s focus. Every “why aren’t we using this?” question that lands mid-sprint has a cost, even when the answer is “we already looked at it.”

Why playbooks don’t transfer

Most influencer playbooks are built on agency economics that in-house teams cannot replicate. Treat influencer recommendations as research inputs, not validated playbooks. Before adopting someone else’s stack, check whether their context (agency vs. in-house, volume, pricing, niche) matches yours. If any of those are fundamentally different, the playbook will not transfer. And protect your team’s focus: every forwarded LinkedIn post has a cost.

5. No amount of engineering fixes bad inputs

Garbage in, garbage out has not changed. It never will.

The three-legged stool

Teams spend enormous energy on two legs and treat the third as settled. GTM engineering amplifies whatever you point it at. If the strategic inputs are wrong, better engineering just burns resources faster.

| Engine | What it covers | Typical investment | Impact if wrong |
|---|---|---|---|
| Creative engine | Content, design, brand, messaging execution. The visible output the market sees. | High | ●●●●● |
| Systems engine | Data, tools, orchestration, integrations. The connective tissue that makes channels work together. | Growing | ●●●●● |
| Strategic foundation | Positioning, ICP, messaging, offer. Who you are going after, what you are saying, and why it matters to them. | Often neglected | ●●●●● |

You can have the most sophisticated GTM engineering stack in B2B. If your positioning is off, your ICP is wrong, or your messaging does not land, all of that machinery produces beautifully executed work that nobody responds to.

The three-legged stool has not changed. You need the creative engine (content, design, brand). You need the systems engine (data, tools, orchestration). And you need the strategic foundation: who you are going after, what your positioning is, and what your offer is. Teams spend enormous energy on the first two legs and treat the third one as settled when it often is not.

What this means

I see teams give up too soon on positioning and messaging. They test something for four to six weeks, do not see results, and move on. They assume the machinery is the problem when it is often the inputs. Product-market fit, messaging, and ICP are upstream of everything. Getting them wrong means the creative team and the systems team are both spinning their wheels, and no tool or integration fixes that.

Why it matters

The biggest opportunity in all of this technology is often the boring stuff. Get the fundamentals right. Do the simple things well. The tools become useful only after the foundation is solid. AI can speed things up, but it can also speed up the wrong thing. A beautifully orchestrated GTM engine pointed at the wrong audience or the wrong message just burns resources faster.

Operator moves

- Before adding a new tool or capability, ask whether your strategic inputs are right. If positioning, messaging, or ICP are unclear or untested, fix those first.

- Give messaging enough time to generate real signal. Four to six weeks is almost never enough in high-consideration B2B.

- When performance is not where you want it, check the inputs before blaming the machinery.

6. Either fund GTM engineering or stop expecting the results

You either set a budget of both time and money to build GTM engineering as a capability, or you do not expect it to happen. There is no middle ground.

Telling a team to “do more with less” or “go figure out these tools on nights and weekends” is not a strategy. It is a hope. The innovation budget for most marketing teams used to be 5 to 10% of total spend. Given the pace of change in data, tools, and AI, it may need to be 20 to 30% for the next few years.
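Here is a rough illustration of what that shift means in dollars. It assumes a hypothetical $1,000,000 annual marketing budget, which is an assumption for the arithmetic and not a benchmark.

$$
\begin{aligned}
5\% \text{ to } 10\% \times \$1{,}000{,}000 &= \$50{,}000 \text{ to } \$100{,}000 \\
20\% \text{ to } 30\% \times \$1{,}000{,}000 &= \$200{,}000 \text{ to } \$300{,}000
\end{aligned}
$$

Compare the midpoints: 7.5% ($75,000) versus 25% ($250,000). The innovation line more than triples. At that size it has to be an explicit budget decision rather than something absorbed into existing workloads.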

Experimentation has a real cost: tool subscriptions, training time, implementation effort, and the inevitable dead ends. Someone on your team might build a 42-step automation flow in Make or n8n that never gets used. That is not waste. That is the cost of learning. If leadership is not willing to fund that explicitly, they should not expect the team to innovate on top of their existing workload.

What this means

The market genuinely requires upskilling, but “AI” is not a skill you can just add. Every application is contextual, the tools change faster than anyone can learn them, and the skill set your company needs depends on your market, your product, and your GTM motion. You may need to shuffle the team: reduce headcount in one role and add it in another. You may need to bring in agencies or consultancies specifically for tool evaluation and systems integration.

Why it matters

The teams that pull ahead will be the ones whose leadership recognized GTM engineering as a real discipline, funded it properly, and gave it time to compound. Not the ones that told their existing team to figure it out between campaigns.

Operator moves

- Make your experimentation budget explicit. Include both money (tool subscriptions, vendor evaluations) and time (allocated capacity for research and implementation).

- Accept that some experiments will fail. A tool evaluation that ends in “not for us” is not wasted effort. It is a decision.

- If you cannot allocate budget for GTM engineering, be honest about it. Do not punish the team for not delivering results that require capabilities you have not funded.

- Consider whether agencies or consultancies should handle the engineering and evaluation work, especially in the near term while you figure out what the right internal team looks like.

Leaders should put boundaries around what they expect right now. The environment is different than it has ever been, and it is changing faster than anyone can track. Either invest in the capability to navigate it, or accept the pace you are at. The only bad option is expecting the world while funding the status quo.

Context on Outkeep’s Approach

Outkeep operates at the intersection of these tensions. We build for B2B marketing teams that are dealing with the complexity of data, deliverability, and multi-system orchestration, and we see firsthand how quickly the gap between tooling capability and team capacity can widen. Our perspective comes from living inside the GTM engineering problem, not observing it from the outside.

FAQ for Modern B2B Marketing Teams

What is GTM engineering?

GTM engineering is the work of connecting data sources, point solutions, AI capabilities, and channel execution into a coherent, persistent system. It requires technical skills (APIs, data flows, automation logic) applied to go-to-market problems. It is distinct from traditional marketing operations and from creative marketing work.

Has the B2B marketing toolkit actually changed?

The core channels have not. Email, paid, organic social, events, and SEO are the same toolkit from five years ago. What changed is the operational and engineering complexity required to make them work together effectively.

Why does leadership keep saying “use more AI” when the team is already using it?

Most AI adoption happens at the individual level. Creatives are usually well ahead of what leadership realizes. The gap is not effort or adoption. It is that AI is being used in silos rather than deployed as an organizational system, which requires engineering investment.

Should we replicate an influencer’s tool stack?

Almost never directly. Most marketing influencers operate from agency economics with volume pricing, proprietary data, and testing scale that in-house teams cannot match. Treat their recommendations as research inputs, not playbooks.

How much should we budget for GTM engineering experimentation?

Innovation budgets used to be 5-10% of marketing spend. Given the current pace of change, 20-30% may be more appropriate for the next few years, including both tool costs and allocated team time.

Do we need to hire differently?

Probably. Marketing teams built around 70-80% creative roles need to rebalance toward systems and orchestration capabilities. The people who can do GTM engineering work are a different profile than traditional marketing hires.

What should we fix before investing in more tools?

Your strategic inputs: positioning, ICP, messaging, and offer. GTM engineering amplifies whatever you point it at. If the inputs are wrong, better engineering just burns resources faster.

How long should we test before deciding something does not work?

In high-consideration B2B, four to six weeks is rarely enough. Plan for at least one full sales cycle before making a judgment. Most teams quit too early, before the approach has had time to generate real signal.